Five point six million dollars. In the world of frontier AI, that is a rounding error. Yet that is the reported compute cost of the final training run for DeepSeek V3, a model that stands toe-to-toe with titans like GPT-4o and Claude 3.5 Sonnet. While the industry has long been obsessed with the “brute force” scaling laws that saw OpenAI spend upwards of $100 million on GPT-4, DeepSeek is proving that architectural brilliance can disrupt the status quo. The next chapter of this efficiency revolution is expected in mid-February with DeepSeek V4.

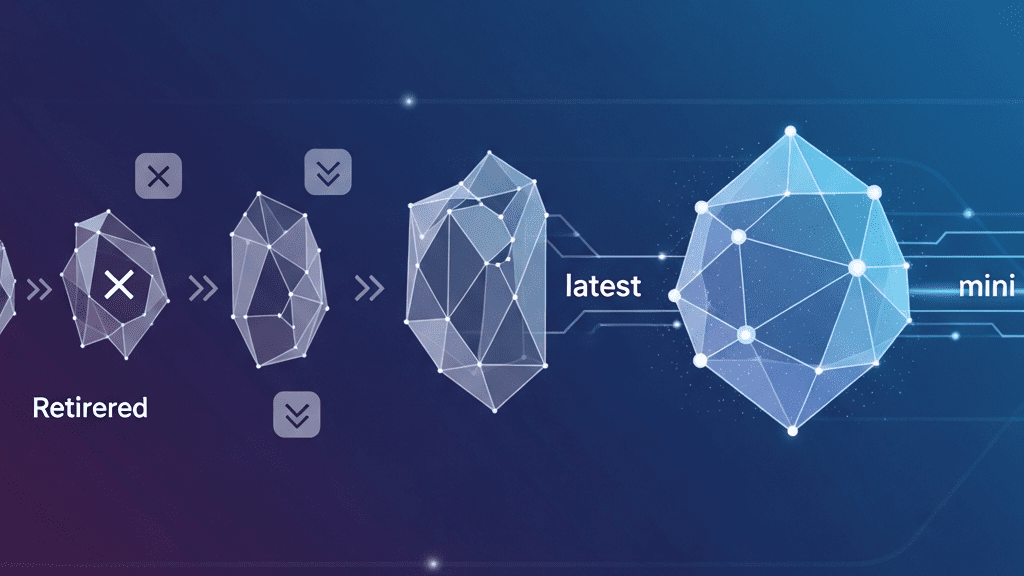

V4 isn’t just another incremental update. It is reportedly a 1-trillion-parameter behemoth built on three radical architectural innovations: Manifold-Constrained Hyper-Connections (mHC), Engram conditional memory, and DeepSeek Sparse Attention. For anyone following the infrastructure side of AI, this represents a pivotal shift: we are moving away from “who has the most GPUs” to “who has the best math”.

The mHC Breakthrough: Stabilizing the Unscalable

The most significant technical leap in V4 is Manifold-Constrained Hyper-Connections (mHC). To understand why it matters, start with the “identity mapping” property. In a standard Transformer, each residual connection adds a layer’s output to an unchanged copy of its input, so information and gradients can skip layers and flow through deep networks without vanishing. Hyper-Connections widen this residual stream into several parallel streams and learn how to mix them between layers. That adds expressiveness but gives up the clean identity path, and as models grow deeper and larger, the learned mixing can become unstable.
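In symbols, the contrast looks roughly like this. The notation is our own simplification, ignoring normalization layers and the separate read-in and write-out mappings that Hyper-Connections also learn: $x_l$ is the residual stream entering layer $l$, $F$ is the layer’s transformation, and $H_l$ is a hypothetical learned matrix that mixes $n$ parallel residual streams.

```latex
\begin{aligned}
\text{standard residual:}\quad x_{l+1} &= x_l + F(x_l)
  &&\Longrightarrow\; x_L = x_l + \sum_{i=l}^{L-1} F(x_i),\\
\text{learned mixing:}\quad x_{l+1} &= H_l\,x_l + F(x_l)
  &&\Longrightarrow\; x_L = H_{L-1} H_{L-2} \cdots H_l\,x_l + (\text{contributions from } F).
\end{aligned}
```

In the first line, $x_l$ reaches layer $L$ with gain exactly 1. In the second, it passes through a product of matrices. If every $H_i$ scales its input by $1+\varepsilon$ (for instance $H_i = (1+\varepsilon)I$), the composed path scales it by $(1+\varepsilon)^{L-l}$. A 10% excess per layer over 48 layers already compounds to $1.1^{48} \approx 97\times$.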

DeepSeek’s founder, Liang Wenfeng, recently signaled the strategic importance of this work by personally uploading the mHC paper to arXiv. The problem with traditional Hyper-Connections (HC) is that their unconstrained mixing of residual streams can make signal gains explode, by more than 3000× in one 27B-parameter model, leading to catastrophic training divergence. DeepSeek’s solution is as elegant as it is mathematically rigorous:

- Birkhoff Polytope Constraints: Instead of allowing arbitrary mixing of residual streams, mHC constrains the mixing matrices to the Birkhoff polytope, the set of doubly stochastic matrices (non-negative entries, with every row and every column summing to 1).

- Sinkhorn-Knopp Integration: The model uses the Sinkhorn-Knopp algorithm, which alternately normalizes rows and columns, to project its learned mixing matrices onto that set. Because a product of doubly stochastic matrices is itself doubly stochastic, stacking many layers cannot amplify the signal, which keeps its magnitude bounded throughout the network.

- Efficiency Gains: This approach allows for a 4× wider residual stream at a cost of only about 6.7% additional training time.
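To make the constraint concrete, here is a minimal pure-Python sketch. It is our illustration, not DeepSeek’s code. It assumes that raw mixing scores are made positive with `exp()` before Sinkhorn-Knopp normalization, and it uses 20 iterations, which is an arbitrary choice here. The function names, the 4-stream/64-layer sizes, and the “unconstrained” baseline (positive matrices with no normalization) are hypothetical stand-ins, not Hyper-Connections’ actual parameterization. The sketch stacks 64 mixing matrices and compares the gain of the composed residual path with and without the projection.

```python
import math
import random


def sinkhorn_knopp(logits, iters=20):
    """Approximately project a square matrix onto the Birkhoff polytope.

    Entries are made positive with exp(), then rows and columns are
    normalized in alternation. Each pass ends on a column step, so columns
    sum to 1 (up to float error) and row sums converge toward 1.
    """
    m = [[math.exp(v) for v in row] for row in logits]
    n = len(m)
    for _ in range(iters):
        # Row step: rescale each row so it sums to 1.
        row_sums = [sum(row) for row in m]
        m = [[v / s for v in row] for row, s in zip(m, row_sums)]
        # Column step: rescale each column so it sums to 1.
        col_sums = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return m


def matmul(a, b):
    """Plain product of two square lists-of-lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def gain(m):
    """Infinity norm (max absolute row sum): the largest factor by which
    the matrix can scale the biggest entry of an input vector."""
    return max(sum(abs(v) for v in row) for row in m)


def stacked_gain(matrices):
    """Gain of the composed mapping after applying every matrix in turn."""
    n = len(matrices[0])
    total = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for m in matrices:
        total = matmul(m, total)  # total = m_k ... m_2 m_1
    return gain(total)


rng = random.Random(0)
n_streams, depth = 4, 64  # toy sizes: 4 parallel residual streams, 64 layers
raw_logits = [[[rng.uniform(-1.0, 1.0) for _ in range(n_streams)]
               for _ in range(n_streams)] for _ in range(depth)]

# Toy stand-in for unconstrained mixing: positive matrices, no normalization.
unconstrained = [[[math.exp(v) for v in row] for row in lg] for lg in raw_logits]
# The same raw scores, projected toward doubly stochastic matrices.
constrained = [sinkhorn_knopp(lg) for lg in raw_logits]

print(f"unconstrained gain after {depth} layers: {stacked_gain(unconstrained):.3e}")
print(f"constrained gain after {depth} layers:   {stacked_gain(constrained):.6f}")
```

In the toy baseline every row sums to more than 1, so the unconstrained gain grows exponentially with depth. The constrained gain stays at about 1 because each Sinkhorn-projected factor is, up to the finite iteration count, doubly stochastic, and products of doubly stochastic matrices remain doubly stochastic.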

Benchmark Dominance: Efficiency in Action

The data from DeepSeek’s 27B test model is nothing short of spectacular. By applying mHC, the team observed across-the-board improvements in reasoning and problem-solving benchmarks. For instance, the Big Bench Hard (BBH) score jumped from a baseline of 43.8 to 51.0. Similar surges were seen in DROP, GSM8K, and MMLU benchmarks. Analysts are calling this a “striking breakthrough” because it offers a path to frontier-level performance without the linear growth in compute costs typically associated with trillion-parameter models.
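For scale, the BBH figures above work out as follows. This is simple arithmetic on the two reported scores only and says nothing about the other benchmarks:

```latex
\Delta_{\text{abs}} = 51.0 - 43.8 = 7.2 \text{ points},\qquad
\Delta_{\text{rel}} = \frac{7.2}{43.8} \approx 16.4\%
```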

The New Era of AI Infrastructure

For data center operators and enterprise AI buyers, the DeepSeek V4 release could mean a major shift in ROI calculations. If V4 delivers on its promise, it will show that training efficiency can be improved through software-level architectural constraints rather than hardware expansion alone. This “efficiency-first” philosophy suggests that the next generation of LLMs might be defined by their mathematical constraints rather than their electricity bills.

As we look toward the mid-February launch, one thing is clear: the gap between the “scaling-at-all-cost” camp and the “architectural innovation” camp is widening. DeepSeek is betting that innovation wins every time. Stay tuned—the AI landscape is about to get a lot more interesting.
