For years, the tech world was convinced that the general-purpose Central Processing Unit (CPU) was destined for a secondary role, overshadowed by the raw parallel power of NVIDIA’s GPUs. However, the 2026 Morgan Stanley Technology, Media & Telecom Conference has challenged that narrative. Industry titans Intel and AMD are reporting a massive, unexpected surge in CPU demand, signaling that the ‘brains’ of the data center are back at center stage. As Intel CFO David Zinsner put it: ‘The CPU has become cool again.’

The Orchestration Layer: Why Agentic AI Needs the CPU

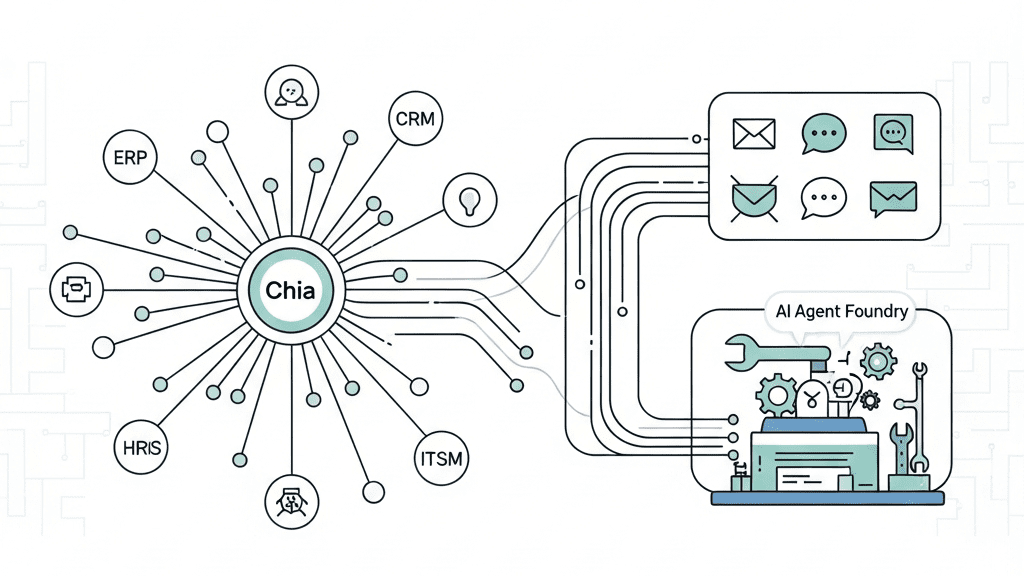

The primary driver behind this shift is the evolution from simple Large Language Models (LLMs) to Agentic AI. Unlike a standard chatbot that simply predicts the next word, an ‘AI Agent’ must observe, reason, plan, and execute tasks autonomously. This ‘agentic workflow’ is computationally complex and requires more than just the brute force of a GPU.

As Zinsner explained, the CPU serves as the ‘Master Orchestrator.’ While GPUs and NPUs (Neural Processing Units) handle the heavy mathematical lifting, the CPU manages the sophisticated logic and data pipelines that allow these accelerators to function. Without a powerful CPU, the most expensive GPU clusters in the world effectively sit idle, waiting for instructions. This architectural necessity is forcing enterprise customers to move away from spot-market buying toward aggressive, long-term supply agreements to guarantee their silicon pipeline.
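
The idle-accelerator point can be illustrated with a toy model. The sketch below is not taken from Intel or AMD; the millisecond figures are invented, and it assumes each agent step runs its CPU-side orchestration and its accelerator inference strictly one after the other (real systems overlap the two to some degree). Under those assumptions, it shows how the share of time an accelerator spends busy depends on how quickly the CPU finishes its part.

```python
from dataclasses import dataclass


@dataclass
class StepCost:
    """Simulated cost of one agent step, in milliseconds (made-up numbers)."""
    cpu_ms: float          # orchestration: parsing, planning, tool calls, I/O
    accelerator_ms: float  # model inference on a GPU or NPU


def accelerator_utilization(steps: list[StepCost]) -> float:
    """Fraction of total time the accelerator is busy, assuming each step
    runs its CPU work and its accelerator work serially (no overlap)."""
    busy = sum(s.accelerator_ms for s in steps)
    total = sum(s.cpu_ms + s.accelerator_ms for s in steps)
    return busy / total if total else 0.0


def run_agent(cpu_ms_per_step: float, accelerator_ms_per_step: float,
              n_steps: int = 4) -> float:
    """Model an observe -> reason -> plan -> execute loop as n_steps serial steps."""
    steps = [StepCost(cpu_ms_per_step, accelerator_ms_per_step)
             for _ in range(n_steps)]
    return accelerator_utilization(steps)


# Hypothetical comparison: same accelerator, slower vs. faster orchestration.
slow_cpu = run_agent(cpu_ms_per_step=60.0, accelerator_ms_per_step=40.0)
fast_cpu = run_agent(cpu_ms_per_step=10.0, accelerator_ms_per_step=40.0)
print(f"slow CPU: accelerator busy {slow_cpu:.0%}")
print(f"fast CPU: accelerator busy {fast_cpu:.0%}")
```

In this simplified model, cutting the CPU-side time per step from 60 ms to 10 ms doubles the share of time the accelerator spends doing useful work, which is the intuition behind calling the CPU the orchestrator.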

The Shortage Roadmap: From GPUs to RAM, and Now to Logic

To understand the gravity of the current situation, we must look at the domino effect of the AI boom that began in late 2022. We are currently witnessing the third wave of the AI-driven hardware crisis:

- 2022–2025 (The GPU Era): Massive hoarding of discrete graphics cards by hyperscalers.

- Late 2025 (The Memory & Storage Crunch): A critical shortage of High-Bandwidth Memory (HBM) and enterprise SSDs, which saw prices skyrocket through early 2026.

- 2026 (The CPU Renaissance): A surge in demand for high-end server processors to manage the ‘Inference Flip’—the transition from training models to actually running them at scale.

AMD CEO Lisa Su admitted that this demand has ‘far exceeded’ her expectations. The shortage is already manifesting in regional markets like China, where both ‘Team Blue’ and ‘Team Red’ are struggling to keep server CPUs in stock. This isn’t just a data center problem; we are seeing a parallel spike in the prosumer market, with high-end Mac Studios and Mac minis flying off shelves as developers build local agents using open-source frameworks like OpenClaw and Moltbot.

The Trickle-Down Risk: Will Consumer PCs Survive?

The most concerning aspect for the average user is the convergence of microarchitectures. Because AMD and Intel leverage the same chip designs for both data centers and consumer PCs to maximize factory yields, a data center shortage inevitably squeezes supply in the consumer market. While Intel and AMD still derive nearly half of their revenue from client PCs, the higher margins in the enterprise sector are a powerful magnet for limited wafer supply.

If current trends hold, the industry is looking at a grim reality: the potential end of the ‘entry-level PC’ by 2028. As consumer-grade memory and storage compete for the same manufacturing capacity as high-priced enterprise components, the cost of building a basic computer may soon become prohibitive for the average student or home office worker.

Multiverso Analysis: A Maturing Ecosystem

We are no longer in the ‘wild west’ of AI training. We have entered the era of deployment. This requires a balanced, heterogeneous computing environment where the CPU’s logic is just as vital as the GPU’s power. For the tech industry, the ‘CPU Renaissance’ isn’t just about sales figures; it’s a sign that AI is finally integrating into the core logic of our computing infrastructure. The bottleneck has moved from ‘how much can we calculate’ to ‘how well can we orchestrate.’

Join the Conversation:

Are we prepared for a world where a basic laptop becomes a luxury item due to data center demand? Or will the shift toward local AI agents save the consumer market? Let us know your thoughts in the comments below.
