March 2026 marks a definitive shift in the history of the web. We have moved past the ‘API Wrapper’ era, in which browsers were mere windows to cloud-based LLMs, into the age of Native Multi-Modal Integration. With the latest web runtime updates, the browser is no longer just a content viewer; it has evolved into a high-performance execution engine capable of running multi-modal models locally, on hardware that performs trillions of operations per second.

The End of the Cloud Bottleneck: Multi-Modal Runtimes

For years, the ‘AI experience’ was synonymous with latency. Developers had to juggle heavy external APIs for vision, voice, and text, often resulting in disjointed user experiences. The breakthrough we are seeing this month is the native baking of these capabilities into the web runtime itself. By leveraging WebGPU and specialized NPU (Neural Processing Unit) hooks, browsers can now see, hear, and reason with near-zero latency.
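As a minimal sketch of what "leveraging WebGPU" looks like at the entry point, the snippet below checks for `navigator.gpu` and requests an adapter before committing to a local inference path. `NavigatorLike`, `hasWebGpu`, and `pickBackend` are illustrative names, not part of any browser API; only `navigator.gpu` and `requestAdapter()` come from the WebGPU specification. NPU access is not shown here, since the article does not say which hooks it has in mind.

```typescript
// Minimal structural types so the sketch compiles without WebGPU type packages.
// They mirror only the two members of the real API used below.
type GpuAdapterLike = object;

interface GpuLike {
  requestAdapter(): Promise<GpuAdapterLike | null>;
}

interface NavigatorLike {
  gpu?: GpuLike;
}

type Backend = "webgpu" | "fallback";

// Cheap synchronous check: does this runtime expose WebGPU at all?
function hasWebGpu(nav: NavigatorLike): boolean {
  return nav.gpu !== undefined;
}

// Resolves to "webgpu" only when navigator.gpu exists AND an adapter is
// actually returned. In every other case the caller should fall back,
// for example to a WebAssembly/CPU path or a remote API.
async function pickBackend(nav: NavigatorLike): Promise<Backend> {
  if (!nav.gpu) return "fallback";
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "webgpu" : "fallback";
  } catch {
    // requestAdapter can reject on some platforms; treat that as unavailable.
    return "fallback";
  }
}
```

In a page, this would be called as `pickBackend(navigator as NavigatorLike)`. Keeping the fallback branch explicit matters because exposing `navigator.gpu` does not guarantee that a usable adapter exists on the user's machine.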

This shift is powered by new silicon standards. As seen in the recent launch of AMD’s Ryzen AI 400 Series and Qualcomm’s Dragonwing processors, consumer hardware now delivers up to 77 TOPS (trillions of operations per second) locally. This allows the browser to handle ‘Agentic Workflows’ (tasks that observe, plan, and act) without ever sending sensitive user data to a third-party server.
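To put the 77 TOPS figure in perspective, here is a back-of-the-envelope sketch of the compute-bound ceiling on text generation speed. It assumes the common rule of thumb of roughly 2 operations per model parameter per generated token; the 3-billion-parameter model size is an illustrative assumption, not a number from the article.

```typescript
// Compute-bound upper limit on generation speed, in tokens per second.
// Assumption: about 2 operations per parameter per generated token.
function peakTokensPerSecond(tops: number, parameters: number): number {
  const opsPerSecond = tops * 1e12; // 1 TOPS = 10^12 operations per second
  const opsPerToken = 2 * parameters;
  return opsPerSecond / opsPerToken;
}

// 77 TOPS with a hypothetical 3-billion-parameter model:
// 77e12 / (2 * 3e9) is roughly 12,833 tokens per second.
const tokenCeiling = peakTokensPerSecond(77, 3e9);
```

This is a ceiling, not a prediction. Real throughput is usually far lower because generation tends to be limited by memory bandwidth rather than raw compute, and vendor TOPS figures are typically quoted for low-precision integer math.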

Siri, Gemini, and the Hybrid Intelligence Model

The landscape isn’t just changing for developers; it’s changing for the world’s largest ecosystems. The historic Apple and Google partnership announced this month, integrating Gemini into Siri, highlights a ‘Hybrid Intelligence’ trend. While Apple uses its Private Cloud Compute for heavy lifting, the core interaction happens on-device. This is the blueprint for 2026: Cloud for training, Edge for execution.
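The hybrid pattern can be sketched as a small routing decision: serve a request on-device when a local model is present and the prompt fits its budget, and otherwise hand it to the cloud. Every name and threshold below (`InferenceRequest`, `DeviceProfile`, `routeRequest`, `maxLocalPromptTokens`) is hypothetical; this illustrates the general idea and is not a description of how Apple or Google route requests.

```typescript
type Target = "on-device" | "cloud";

interface InferenceRequest {
  promptTokens: number; // size of the input, in tokens
}

interface DeviceProfile {
  hasLocalModel: boolean;       // is a model already available locally?
  maxLocalPromptTokens: number; // hypothetical budget the local model can handle
}

// Prefer the edge; use the cloud only for what the device cannot handle.
function routeRequest(req: InferenceRequest, device: DeviceProfile): Target {
  if (!device.hasLocalModel) return "cloud";
  return req.promptTokens <= device.maxLocalPromptTokens ? "on-device" : "cloud";
}

// Example device with a local model and an assumed 4,096-token budget.
const laptop: DeviceProfile = { hasLocalModel: true, maxLocalPromptTokens: 4096 };
const shortPrompt = routeRequest({ promptTokens: 512 }, laptop);    // "on-device"
const longPrompt = routeRequest({ promptTokens: 20000 }, laptop);   // "cloud"
```

A production router would likely weigh more signals (battery, thermal state, data sensitivity), but the default-to-local ordering is the part that reflects the trend described above.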

Multiverso Analysis: Why This Changes Web Engineering Forever

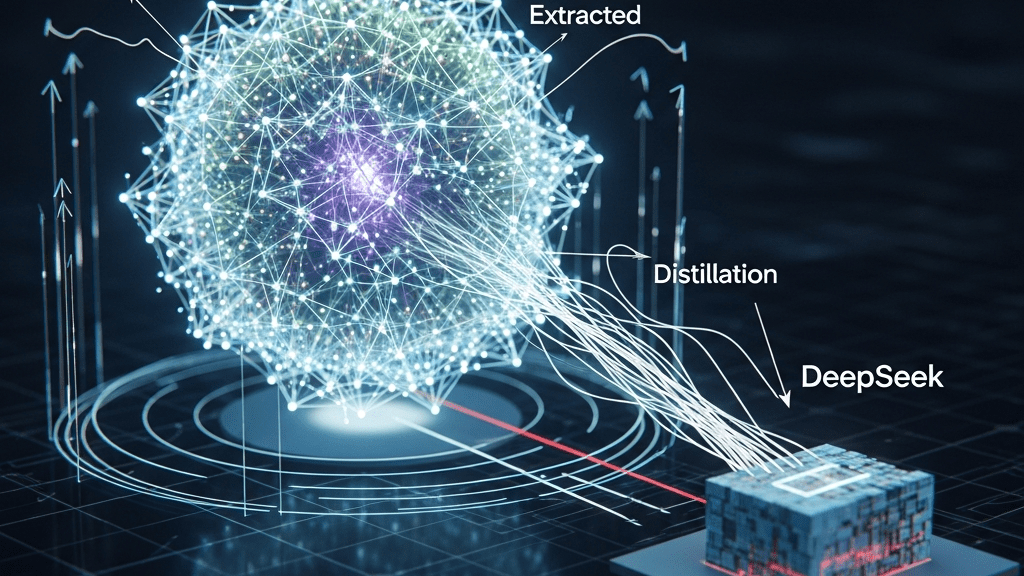

From a technical standpoint, this is the most significant evolution for the TypeScript and WebAssembly communities since the introduction of V8. We are moving toward ‘Local-First AI’. For businesses, this means a 10x reduction in inference costs. Why pay $2.00 per million tokens to a cloud provider when the user’s own hardware can process the request for free?
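The cost argument is simple arithmetic, sketched below under stated assumptions. The $2.00 per million tokens is the article's figure; the monthly volume of 500 million tokens and the 90% local share are illustrative assumptions. Under this model, a 10x reduction corresponds to serving about 90% of tokens on the user's device.

```typescript
// Cloud bill for a given token volume at a per-million-token price.
function cloudCostUsd(tokens: number, pricePerMillionUsd: number): number {
  return (tokens / 1e6) * pricePerMillionUsd;
}

// Cloud bill when a fraction of tokens (localShare, 0..1) never leaves the device.
function hybridCostUsd(tokens: number, pricePerMillionUsd: number, localShare: number): number {
  if (localShare < 0 || localShare > 1) {
    throw new RangeError("localShare must be between 0 and 1");
  }
  return cloudCostUsd(tokens * (1 - localShare), pricePerMillionUsd);
}

const monthlyTokens = 500e6; // assumed volume, not from the article
const allCloud = cloudCostUsd(monthlyTokens, 2.0);        // 1000 USD
const mostlyLocal = hybridCostUsd(monthlyTokens, 2.0, 0.9); // about 100 USD
```

The sketch counts only the provider's bill. On-device inference still has costs that land elsewhere, such as model download size and the user's battery and compute.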

Key Takeaways for 2026:

- Privacy-by-Default: Processing multimodal data (images, voice) locally is no longer a luxury; it’s a standard.

- Near-Zero Latency: Real-time interaction in healthcare (diagnostic assistance) and manufacturing (acoustic defect detection) is now viable via web interfaces.

- Infrastructure Collapse: Small Language Models (SLMs) are being deployed 3x more often than general LLMs, as they fit perfectly within these new native browser runtimes.
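Why small models fit in a browser runtime comes down to weight memory, which can be estimated as parameter count times bytes per weight. The sketch below uses assumed model sizes (3 billion and 70 billion parameters) and 4-bit quantization purely for illustration; none of these numbers come from the article.

```typescript
// Approximate memory needed just for model weights, in GiB.
// Ignores activations, KV cache, and runtime overhead.
function weightFootprintGiB(parameters: number, bitsPerWeight: number): number {
  const bytes = parameters * (bitsPerWeight / 8);
  return bytes / (1024 * 1024 * 1024);
}

const smallModelGiB = weightFootprintGiB(3e9, 4);  // roughly 1.4 GiB
const largeModelGiB = weightFootprintGiB(70e9, 4); // roughly 32.6 GiB
```

At around 1.4 GiB, the smaller model is plausible to download and hold in memory on a consumer laptop, while the larger one exceeds what most browser tabs can realistically allocate.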

The Big Question: As browsers become self-sufficient AI hubs, will the era of massive SaaS subscriptions for ‘AI features’ collapse in favor of open-source, local-first applications? The infrastructure is ready. Are you?
