The landscape of artificial intelligence has shifted. We have moved beyond the era of experimental, discrete AI model training and human-facing inference. Today, we are entering the age of the industrial AI factory—always-on environments that continuously transform massive streams of data, power, and silicon into high-order intelligence. These factories are the engines behind the next generation of agentic reasoning, complex multimodal workflows, and the long-context capabilities required for deep research and autonomous business planning.

To meet these demands, the industry requires more than just faster chips; it requires a fundamental architectural evolution. The NVIDIA Rubin platform is that evolution. Designed for a world where the data center—not the individual server—is the unit of compute, Rubin represents a breakthrough in extreme co-design, integrating every layer of the stack to deliver unprecedented performance, efficiency, and reliability.

The Shift to Always-On AI Factories

The constraints of modern AI scaling have evolved. It is no longer enough to optimize for raw FLOPs in isolation. As we move toward agentic AI that can reason across hundreds of thousands of input tokens, we must also solve for system-level constraints: power delivery, thermal management, security, and deployment velocity. The Rubin platform was built to address these production constraints so that intelligence can be produced predictably and cost-effectively at massive scale.

Extreme Co-Design: The Data Center as the Unit of Compute

At the heart of the Rubin platform is the philosophy of extreme co-design. This isn’t just a collection of components; it is a holistic architecture where GPUs, CPUs, networking, security, and software are engineered together. By treating the entire data center as a single system, Rubin is designed so that the performance gains seen in benchmarks carry over into real-world production. This approach targets the bottlenecks that appear when components are designed and optimized separately, providing a unified foundation for the next decade of AI growth.

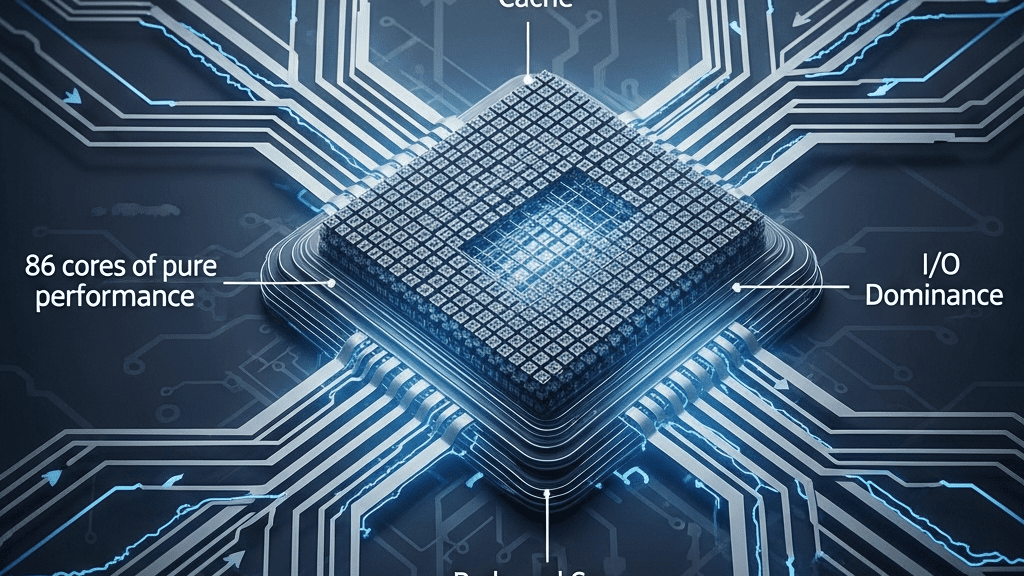

Six New Chips, One AI Supercomputer

The Rubin platform introduces a lineup of six new chips that function as a single, tightly integrated engine. The lineup spans three groups:

- Next-Generation Rubin GPUs: Delivering the massive parallel processing power required for the most demanding LLMs and multimodal models.

- The NVIDIA Vera CPU: Architected to maximize data throughput and complement the high-speed processing of the Rubin GPU.

- Advanced Networking & Infrastructure: New interconnects designed to facilitate the rapid movement of data across the entire fabric of the AI factory.

Together, these chips form the Vera Rubin NVL72, a rack-scale architecture that redefines what is possible in a single deployment footprint.

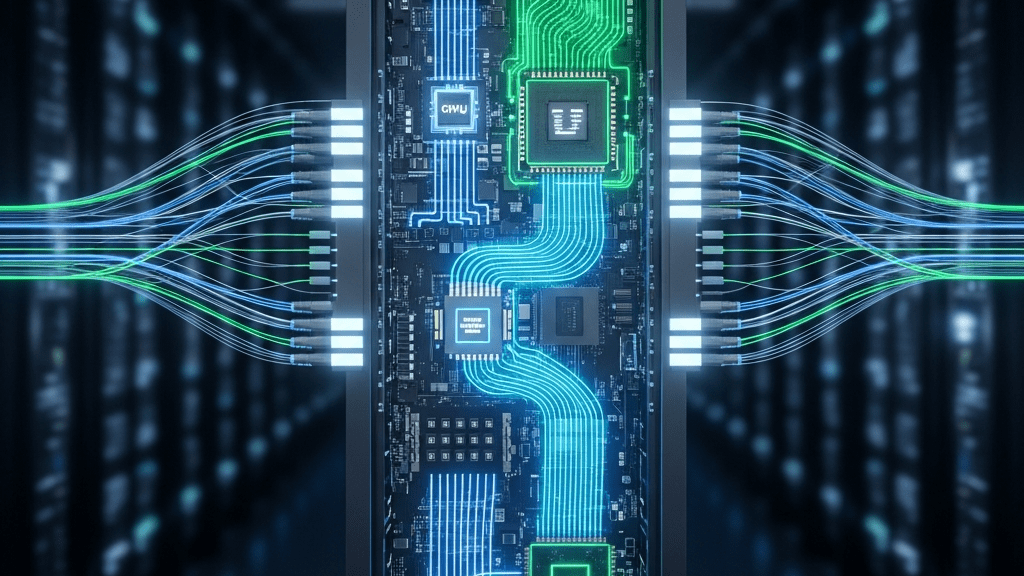

From Chips to Systems: Scaling to the DGX SuperPOD

One of Rubin’s greatest strengths is its scalability. The journey begins with the NVIDIA Vera Rubin superchip, but it doesn’t end there. The platform is designed to scale from a single superchip to the NVL72 rack-scale architecture, and ultimately to the NVIDIA DGX SuperPOD. This modularity allows organizations to deploy AI factories of virtually any size while maintaining consistent performance and operational simplicity.

A Programmable Software Backbone

Hardware this powerful requires a software stack that can keep pace. The Rubin platform is fully programmable through NVIDIA CUDA and the CUDA-X libraries, providing developers with a mature, high-performance ecosystem. From training frameworks to real-time inference engines, the Rubin software stack helps researchers and engineers get the most out of the silicon, enabling faster iteration cycles and quicker time-to-market for AI-driven products.

Performance and Efficiency at Industrial Scale

The ultimate metrics for any AI platform are the ones that reach the bottom line: performance per watt and cost per token. The Rubin platform delivers gains across training, inference, and energy efficiency:

- Training Efficiency: Achieve the same results with one-fourth as many GPUs as previous generations required.

- Inference Throughput: Experience up to a 10x increase in inference throughput, enabling real-time reasoning at a fraction of the cost.

- Sustainability: Superior energy efficiency reduces the total cost of ownership and the environmental footprint of large-scale AI operations.
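
To make the first two claims concrete, here is a minimal back-of-the-envelope sketch. The 4x and 10x factors come from the list above; the baseline cluster size, hourly cost, and throughput are hypothetical values chosen only for illustration, and the calculation assumes hourly cost stays flat while throughput rises, which real deployments may not match.

```python
import math


def gpus_needed(baseline_gpus: int, reduction_factor: float) -> int:
    """GPUs required if the same job needs `reduction_factor` times fewer GPUs."""
    return math.ceil(baseline_gpus / reduction_factor)


def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost per one million tokens at a steady throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical baseline, not taken from the article.
BASELINE_GPUS = 1024
BASELINE_HOURLY_COST_USD = 100.0
BASELINE_TOKENS_PER_SECOND = 10_000.0

# "One-fourth the number of GPUs" for the same training result.
rubin_gpus = gpus_needed(BASELINE_GPUS, reduction_factor=4)

# "Up to 10x" inference throughput, treated here as the best case
# and with hourly cost assumed unchanged.
baseline_cost = cost_per_million_tokens(
    BASELINE_HOURLY_COST_USD, BASELINE_TOKENS_PER_SECOND
)
improved_cost = cost_per_million_tokens(
    BASELINE_HOURLY_COST_USD, BASELINE_TOKENS_PER_SECOND * 10
)

print(f"Training GPUs: {BASELINE_GPUS} -> {rubin_gpus}")
print(f"Cost per 1M tokens: ${baseline_cost:.2f} -> ${improved_cost:.2f}")
```

Under these assumptions, cost per token falls in direct proportion to the throughput gain. If the newer hardware costs more per hour to run, the saving shrinks accordingly, so the hourly cost is the first input to replace with real figures.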

The NVIDIA Rubin platform isn’t just an incremental update—it is the blueprint for the next generation of global intelligence. By unifying silicon, software, and systems, NVIDIA is providing the tools necessary to power the world’s most advanced AI factories.
