The AI revolution just shifted into a higher gear. Nvidia has confirmed that it has begun shipping its first Vera Rubin AI chip samples to key customers, a significant step forward for high-performance computing infrastructure. This isn’t a minor iteration: Nvidia is positioning it as a full-stack architectural redesign built for the most demanding generative AI and neural network workloads.

A Unified Powerhouse: CPU, GPU, and Beyond

At the heart of the Vera Rubin platform is a philosophy of total integration. While previous generations focused heavily on raw GPU power, the Vera Rubin architecture treats the entire rack as a single, unified computer. By combining advanced CPU architectures with high-memory GPUs and specialized interconnects, Nvidia aims to remove the data-movement bottlenecks that have traditionally slowed large-scale AI training and inference.

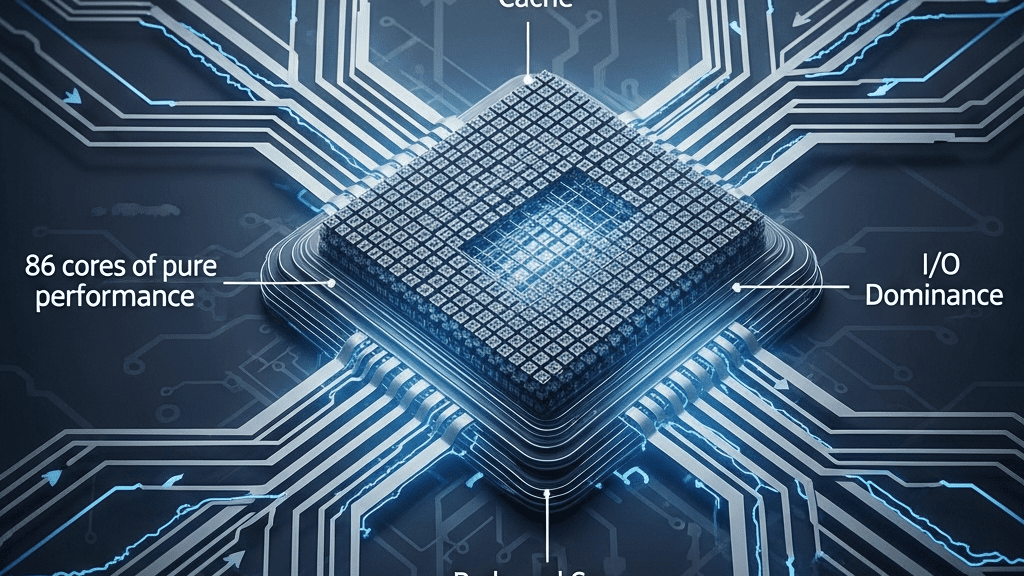

The platform is being delivered via the NVL72 VR200 compute trays—rack-ready systems that come fully assembled with:

- Next-generation CPUs and high-bandwidth memory (HBM).

- Advanced GPU architectures optimized for real-time analytics.

- Integrated storage and networking components.

Next-Gen Interconnects and Networking Dominance

To support the massive scale of modern data centers, Nvidia is leaning heavily into its networking stack. The Vera Rubin platform isn’t just fast; it’s densely connected. This hardware release introduces NVLink 6.0 switch ASICs, which are meant to raise the bandwidth available between components. Furthermore, the inclusion of BlueField-4 DPUs (Data Processing Units) with integrated SSDs offloads data movement and storage traffic so that it does not tax the primary compute cores.

For large-scale deployments, Nvidia has integrated photonics-based interconnects alongside Spectrum-6 Photonics Ethernet and Quantum-CX9 InfiniBand NICs. This combination is intended to provide the scalable connectivity that massive clusters require, so that as data centers grow, performance keeps scaling close to linearly instead of hitting an interconnect ceiling.
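To see why interconnect overhead decides whether a cluster scales near-linearly or hits a ceiling, here is a minimal sketch using Amdahl's law, a textbook model and not anything published by Nvidia. The function name `speedup`, the `comm_fraction` parameter, and every number below are illustrative assumptions, not Vera Rubin measurements; the count of 72 is chosen only to echo the NVL72 name.

```python
def speedup(n_gpus: int, comm_fraction: float) -> float:
    """Amdahl's-law speedup over a single GPU.

    comm_fraction is the (hypothetical) share of single-GPU step time spent
    on communication or other work that does not shrink as GPUs are added.
    """
    if n_gpus < 1:
        raise ValueError("n_gpus must be at least 1")
    if not 0.0 <= comm_fraction < 1.0:
        raise ValueError("comm_fraction must be in [0, 1)")
    parallel_fraction = 1.0 - comm_fraction
    return 1.0 / (comm_fraction + parallel_fraction / n_gpus)


if __name__ == "__main__":
    # Illustrative values only: compare a perfect interconnect with ones
    # that leave 1% and 5% of the work unscalable.
    for comm_fraction in (0.0, 0.01, 0.05):
        for n_gpus in (8, 72, 576):
            s = speedup(n_gpus, comm_fraction)
            print(f"overhead={comm_fraction:.2f} gpus={n_gpus:4d} "
                  f"speedup={s:7.1f} efficiency={s / n_gpus:.2f}")
```

In this model the speedup can never exceed `1 / comm_fraction`, no matter how many GPUs are added. Under these assumptions, that cap is the "ceiling" that faster interconnects are meant to push out of reach by shrinking the unscalable share.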

Strategic Partnerships and the Road Ahead

Industry giants like Foxconn, Quanta, and Supermicro are already in the driver’s seat, using early access to optimize AI software across their respective data center configurations. This early sampling phase is critical because it lets partners tune their environments for data-intensive AI tasks well before the hardware reaches mass production.

Nvidia’s Chief Financial Officer, Colette Kress, confirmed that the company remains on track to commence full-scale production shipments in the second half of the year. For enterprises looking to lead in the generative AI space, the arrival of Vera Rubin represents a definitive turning point in what is computationally possible.
