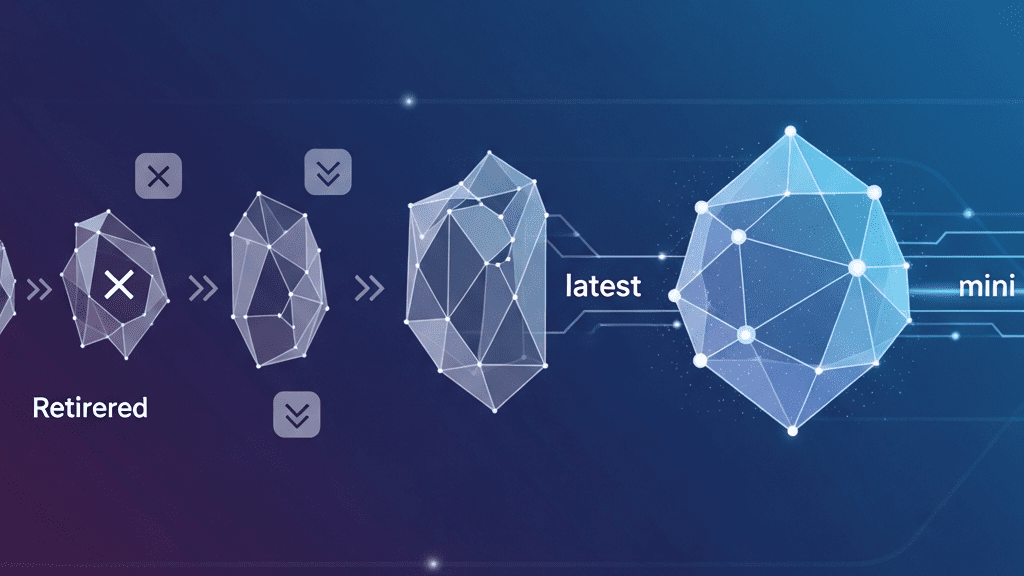

The landscape of Generative AI is moving at a breakneck pace, and staying current is a large part of building high-performance, scalable applications. OpenAI has announced that it is streamlining its API ecosystem by retiring specific snapshots of GPT-4o, GPT-4, and the venerable GPT-3.5. This isn’t just house-cleaning; it’s a strategic move to optimize the API for better performance and reliability.

The Evolution of the OpenAI Model Ecosystem

As OpenAI pushes the boundaries with the ‘Omni’ era, maintaining a massive legacy fleet of specific model snapshots becomes a bottleneck for innovation. By deprecating older versions, OpenAI can focus resources on the latest, more efficient architectures like GPT-4o and GPT-4o-mini. For us in the dev community, this should mean more consistent latency, improved throughput, and access to the latest refinements in instruction following and safety.

Key Models Slated for Retirement

While the full list of deprecations is comprehensive, there are a few heavy hitters that most production environments will need to address. Here are the primary snapshots entering the deprecation phase:

- GPT-4o (2024-05-13): The initial snapshot of the flagship Omni model will be retired, with the latest stable version becoming the primary target.

- GPT-4 Snapshots: Older iterations (including the 0613 and 0314 snapshots) are being phased out in favor of GPT-4 Turbo and GPT-4o.

- GPT-3.5 Turbo (0613 and 0301): The transition away from legacy GPT-3.5 models is accelerating, and developers are being urged toward the more cost-effective and capable GPT-4o-mini.
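One way to act on a list like this is to keep the old-to-new mapping in a single place rather than scattered across call sites. The sketch below is illustrative only: the `REPLACEMENTS` table and the `resolve_model` helper are hypothetical names, and the replacement choices simply follow the suggestions in this article. Confirm the exact model IDs and shutdown dates against OpenAI's official deprecations page before relying on it.

```python
# Hypothetical mapping from retiring snapshots to the replacements
# suggested in this article. The choices are assumptions, not an
# official migration table.
REPLACEMENTS = {
    "gpt-4o-2024-05-13": "gpt-4o",
    "gpt-4-0613": "gpt-4o",
    "gpt-4-0314": "gpt-4o",
    "gpt-3.5-turbo-0613": "gpt-4o-mini",
}


def resolve_model(name: str) -> str:
    """Return the suggested replacement for a retiring snapshot.

    Names that are not in the table are returned unchanged.
    """
    return REPLACEMENTS.get(name, name)
```

Routing every model name through a helper like this means a later retirement only requires a one-line change to the table.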

Strategic Migration: Why You Should Act Now

From an architectural standpoint, waiting until the final deprecation date is a high-risk strategy. Migrating early allows for rigorous A/B testing to ensure that output quality and formatting (like JSON mode consistency) remain stable across model versions. Moreover, the newer models typically offer better price-to-performance ratios, meaning a migration could actually lower your operational costs while boosting response times.
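As a rough illustration of the formatting check mentioned above, the sketch below runs a set of prompts through a model and reports which ones fail a simple JSON contract. The function name `json_contract_failures` and the `generate` callable are assumptions made for this example; `generate` stands in for whatever wrapper you already use to call the API, so the check itself needs no network access.

```python
import json
from typing import Callable, Iterable


def json_contract_failures(
    generate: Callable[[str], str],
    prompts: Iterable[str],
    required_keys: set[str],
) -> list[str]:
    """Return the prompts whose output breaks the expected JSON contract.

    `generate` is a hypothetical wrapper: it takes a prompt and returns
    the raw text produced by one model version.
    """
    failures = []
    for prompt in prompts:
        raw = generate(prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            # Output was not valid JSON at all.
            failures.append(prompt)
            continue
        # Output parsed, but must be an object containing every required key.
        if not isinstance(data, dict) or not required_keys.issubset(data):
            failures.append(prompt)
    return failures
```

Running the same prompts through the current snapshot and the candidate replacement, then comparing the two failure lists, gives a quick signal on whether the migration changes output formatting.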

Your Migration Checklist

To ensure a seamless transition for your Node.js or Python-based workflows, we recommend the following expert action plan:

- Audit Your Environment Variables: Identify every instance where a hard-coded snapshot (e.g., `gpt-4-0613`) is used.

- Update to ‘latest’ or ‘mini’: For most general-purpose tasks, transitioning to `gpt-4o-mini` provides superior results at a fraction of the cost of legacy models.

- Run Regression Tests: Use your existing evaluation datasets to compare the outputs of the new snapshots against your current baseline.

- Monitor Logs: Keep a close eye on your API headers for deprecation warnings, which OpenAI provides as a proactive nudge.
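The audit step in the checklist can be partly automated. The following is a minimal sketch, assuming pinned snapshots appear as plain strings in source or config files. The pattern, the `find_pinned_snapshots` and `audit_tree` names, and the file-suffix list are all illustrative choices. The pattern only covers the GPT-3.5 Turbo, GPT-4, and GPT-4o families discussed here and would need extending for others.

```python
import re
from pathlib import Path

# Matches numbered or dated snapshot suffixes such as gpt-4-0613 or
# gpt-4o-2024-05-13. Unpinned aliases such as gpt-4o-mini do not match.
SNAPSHOT_PATTERN = re.compile(
    r"gpt-(?:3\.5-turbo|4o|4)-(?:\d{4}-\d{2}-\d{2}|\d{4})\b"
)

# File types to scan; adjust for your own project layout.
DEFAULT_SUFFIXES = (".py", ".js", ".ts", ".json", ".yaml", ".yml")


def find_pinned_snapshots(text: str) -> list[str]:
    """Return the sorted, de-duplicated snapshot names found in text."""
    return sorted(set(SNAPSHOT_PATTERN.findall(text)))


def audit_tree(root: str, suffixes=DEFAULT_SUFFIXES) -> dict[str, list[str]]:
    """Map each file under root to the pinned snapshot names it contains."""
    hits = {}
    for path in Path(root).rglob("*"):
        # Dotfiles like .env have an empty suffix, so check the name too.
        wanted = path.suffix in suffixes or path.name.startswith(".env")
        if path.is_file() and wanted:
            found = find_pinned_snapshots(path.read_text(errors="ignore"))
            if found:
                hits[str(path)] = found
    return hits
```

Calling `audit_tree(".")` from a project root returns a dictionary of file paths and the pinned names found in each, which can serve as a migration to-do list.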

The pace of AI development requires us to be agile. By embracing these updates now, we ensure our applications remain at the cutting edge of what is possible with the OpenAI platform. Happy coding!
